Rollout Status: Evals is currently being rolled out progressively, starting with Enterprise customers. If you’re an Enterprise customer and don’t see this feature in your account yet, reach out to your account manager to discuss access.

What you can do with Evals

Conduct Tests

Create Test Suites with scenarios that simulate real user interactions. Combine scenarios with Evaluators to measure accuracy and evaluate Agent performance automatically.

Create Evaluators

Define evaluation criteria that automatically assess Agent responses. Evaluators look for specific conditions and score conversations based on your defined rules.

Monitor Performance

Automatically evaluate live Agent conversations using global Evaluators. Track scores, view insights, and monitor quality over time without running manual tests.

Evals sections

The Evals section contains five main sections, accessible from the left sidebar:- Test Suites — Create and manage groups of Test scenarios for your Agent. Each Test Suite can contain multiple scenarios with different prompts and evaluation criteria.

- Evaluators — Configure global evaluation criteria that can be applied across any Test Suite or scenario without needing to set them up each time.

- Runs — View your evaluation run history and results. See average scores, number of conversations evaluated, progress status, credit spend, and creation dates for all past runs.

- Publish Checks — Configure which Test Suites must pass before your Agent can be published. Set a pass threshold and optionally block publishing if evaluations fail.

- Performance — Automatically evaluate live Agent conversations by selecting a global Evaluator, setting a sample rate, and filtering by conversation status.

Understanding Evaluators

Evaluators are evaluation criteria that automatically assess Agent conversations. There are two types of Evaluators:Scenario Evaluators

Scenario Evaluators are created within individual Test scenarios. They evaluate the specific conversation generated by that scenario’s prompt.- Created inside a Test scenario

- Only apply to the scenario they’re defined in

- Scenario-specific evaluation criteria

Global Evaluators

Global Evaluators are configured in the Evaluators tab. They can be selected to run on any Test Suite or scenario without needing to configure them each time — think of them as reusable defaults.- Created in the Evaluators tab (separate from Test Suites)

- Can be selected when running any Test Suite or individual scenario

- Useful for standard criteria you want checked across scenarios, such as professional tone, no hallucinations, or brand voice compliance

- Also used in the Performance tab to automatically evaluate live conversations

Evaluator types

When creating an Evaluator (either scenario-level or global), you choose from the following types:LLM Judge

LLM Judge

Uses an LLM to evaluate conversations against a prompt you define.

| Field | Description |

|---|---|

| Evaluation Prompt | Describe the criteria for passing |

| Judge model | Select which model evaluates the conversation |

| Truncate long conversations | Toggle to truncate lengthy conversations before evaluation |

String Contains

String Contains

Checks whether the Agent’s response includes specific text.

| Field | Description |

|---|---|

| Required text | The text that must appear in the response |

String Equals

String Equals

Checks whether the Agent’s response exactly matches an expected value.

| Field | Description |

|---|---|

| Expected value | The exact message the Agent should have sent |

Tool Usage

Tool Usage

Checks whether a specific tool was used during the conversation.

| Field | Description |

|---|---|

| Tool | Select the tool to check for |

| Position | Whether the tool was used anywhere, used first, or used last |

| Comparison | Check if the tool was used at least, exactly, or at most X times |

- Go to the Monitor tab and select Evals, then select Evaluators

- Click + New Evaluator

- Select a Type (LLM Judge, String Contains, String Equals, or Tool Usage)

- Enter a Name for the Evaluator (e.g., “Professional Tone”)

- Configure the type-specific settings (see table above)

- Click Create Evaluator

When you run a Test scenario, scenario-level Evaluators are always included automatically. You can also add or remove global Evaluators (from the Evaluators tab) before each run, allowing you to mix standard criteria with scenario-specific evaluation rules.

Creating a Test Suite with a scenario

- Open your Agent in the builder and click the Monitor tab (next to the Run tab). Select Evals from the left sidebar, then select Test Suites.

- Click the + New test suite button. Enter a name for your Test Suite and click Create.

- Click on the Test Suite you just created to open it.

- Click the + New Test button to create a scenario within your Test Suite.

-

Fill in the scenario details:

Field Description Example Scenario name A descriptive name for this Test case ”Response Empathy” Persona & situation The persona or situation the simulated user will adopt ”You are an impatient customer who wants quick answers about their bill.” First message A fixed message the simulated user sends to your Agent as the opening message (optional) “Hi, I need help with my bill.” Max turns Maximum conversation turns (1-50) 10 Number of runs How many times this scenario should be executed 3 -

Add Evaluators to define how this specific scenario should be evaluated:

Click Create Evaluator to save it. You can then create additional Evaluators to add more evaluation criteria to the scenario.

Field Description Example Type The Evaluator type LLM Judge Name Name of the evaluation criterion ”Empathy Shown” Type-specific config Settings based on the chosen type (see Evaluator types) Evaluation Prompt: “Did the Agent acknowledge the customer’s frustration and express empathy before offering solutions?” -

(Optional) Add Tool simulations to emulate tool usage without actually calling the tools. Tool simulations are configured at the scenario level:

- Select a tool to simulate

- Provide a prompt describing what the tool should return (a fake response is generated based on your prompt)

- In the Advanced dropdown, you can select a Simulation model to control which model generates the simulated response

- Click Save Test scenario to save your configuration.

Example scenarios

Here are some example Test scenarios you might create:Customer Support - Empathy test

Customer Support - Empathy test

Scenario name: Response EmpathyPersona & situation: You are a long-time customer who was recently charged twice for the same order. You’ve already contacted support once without resolution and are feeling frustrated but willing to give the Agent a chance to help. Express your concerns clearly and see if the Agent acknowledges your situation before jumping to solutions.Max turns: 10Evaluator: Empathy Shown (LLM Judge)

- Evaluation Prompt: Did the Agent acknowledge the customer’s frustration and express empathy before offering solutions? The response should show understanding of the emotional state and validate their concerns.

Sales - Product knowledge test

Sales - Product knowledge test

Scenario name: Product ExpertisePersona & situation: You are a procurement manager at a mid-sized company evaluating solutions for your team. You need specific details about enterprise pricing tiers, integration capabilities with existing tools like Salesforce and HubSpot, and data security certifications. Ask clarifying questions and compare features against competitors you’re also considering.Max turns: 15Evaluator: Accurate Information (LLM Judge)

- Evaluation Prompt: Did the Agent provide accurate product information without making claims that cannot be verified? Responses should be factual, reference actual product capabilities, and acknowledge when information needs to be confirmed by a sales representative.

Support - Escalation handling

Support - Escalation handling

Scenario name: Escalation RequestPersona & situation: You are a paying customer who has experienced a service outage affecting your business operations. You’ve already troubleshooted with the knowledge base articles and need to speak with a senior support engineer or account manager. Be firm but professional in your request, and provide context about the business impact.Max turns: 5Evaluator: Appropriate Escalation (LLM Judge)

- Evaluation Prompt: Did the Agent acknowledge the severity of the situation, validate the customer’s need for escalation, and initiate a handoff to a human representative while maintaining a professional and empathetic tone throughout?

Running evaluations

You can run an entire Test Suite or an individual Test scenario from within a Test Suite by clicking the Run button on either. You can select specific Test scenarios within a Test Suite to run certain ones at once, or run all scenarios in the Test Suite together. Note that you cannot bulk select and run multiple Test Suites at the same time.- Enter a name for the evaluation run (e.g., “Scenario Run - Jan 14, 12:14 PM”). A default name with timestamp is provided.

- Select which global Evaluators to include in the run — you can add or remove global Evaluators before starting. Scenario-level Evaluators are always included automatically.

- Click Run to begin. The system will simulate conversations with your Agent based on your scenario prompts and evaluate them with your selected Evaluators.

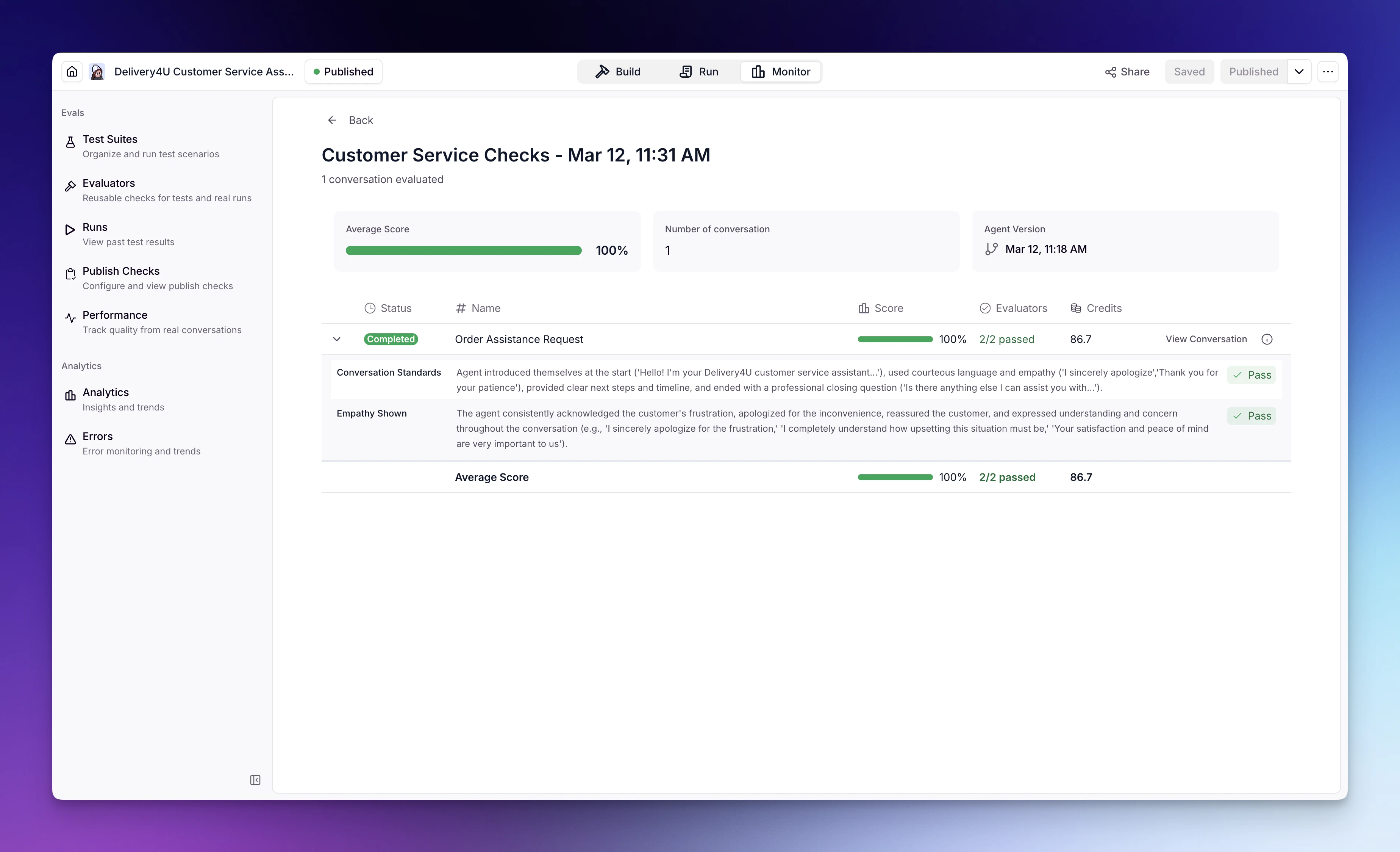

Understanding results

After running an evaluation, you’ll see a detailed results screen:Run summary

The top of the results page shows key metrics:| Metric | Description |

|---|---|

| Average Score | Overall pass rate across all scenarios and Evaluators |

| Number of Conversations | How many Test conversations were evaluated |

| Agent Version | The version of the Agent that was tested |

Scenario results

Each scenario displays:| Column | Description |

|---|---|

| Status | Running, Completed, or Failed |

| Name | The scenario name |

| Score | Percentage of Evaluators that passed (shown with progress bar) |

| Evaluators | Pass/fail count (e.g., “1/1 passed”) |

| Credits | Credits consumed for this scenario |

Viewing conversation details

Click View Conversation on any scenario to see:- The full conversation between the simulated user and your Agent

- Evaluator verdicts from all Evaluators included in the run, with detailed explanations of why each Evaluator passed or failed

Pass: The Agent demonstrated strong empathy throughout the conversation. Key examples include: acknowledging the customer’s frustration with being transferred multiple times (“I completely understand how upsetting it must be to feel like you’re not getting the help you need”), validating her experience with the double charge (“I truly understand how frustrating it is to be charged twice”), and directly addressing her skepticism by saying “I completely understand your concerns, especially given your previous experience.”

Performance tab

The Performance tab lets you automatically evaluate live Agent conversations without manually running Test Suites. This is useful for ongoing quality monitoring.Setting up Performance monitoring

- Go to the Monitor tab, select Evals, then select Performance.

- Select a global Evaluator you’ve created in the Evaluators tab.

- Set a Sample rate — the percentage of conversations to evaluate.

- (Optional) Set a Conversation status filter to only evaluate conversations with specific statuses (e.g., completed, escalated). Leave blank to evaluate all conversations.

- Save your settings.

Viewing Performance insights

After the Performance evaluator has processed conversations, you can view:| Metric | Description |

|---|---|

| Overall Score | Aggregate score across all evaluated conversations |

| Total Runs | Number of conversations evaluated |

| Evaluators | Which Evaluators are active |

- Data points for the overall score over time

- Evaluator breakdown showing individual scoring per Evaluator

- Graphs visualizing Evaluator performance trends

- List of evaluation runs with score, name, and the ability to view the full conversation

Publish Checks

Publish Checks let you choose which Test Suites to run before your Agent is published. If the results don’t meet your threshold, publishing can be blocked. You can configure Publish Checks from the Publish Checks section in Evals.Test sets to run

Select which Test Suites to run before publishing. Click Add test sets to choose them — all scenarios in the selected Test Suites will be evaluated.Publish settings

Configure how evaluations affect the publish process:| Setting | Description |

|---|---|

| Pass threshold (%) | The minimum score percentage required for the evaluation to pass (e.g., 100%) |

| Block publish if evaluation fails | When checked, the Agent will only be published if the evaluation score meets or exceeds the pass threshold. If unchecked, the Agent will be published even if the evaluation fails the threshold. |

Best practices

Start simple

Begin with a few core scenarios that test your Agent’s primary use cases. Add complexity as you learn what matters most.

Be specific with Evaluators

Write detailed evaluation rules. Vague criteria lead to inconsistent results. Include specific examples of what passing looks like.

Test edge cases

Create scenarios for difficult situations: angry customers, off-topic requests, requests to bypass rules, etc.

Use Performance monitoring

Set up global Evaluators in the Performance tab to continuously monitor live Agent conversations without manual testing.

Frequently asked questions (FAQs)

How many scenarios can I have in a Test Suite?

How many scenarios can I have in a Test Suite?

You can add as many scenarios as needed to a single Test Suite. Each scenario is evaluated independently and can have its own Evaluators.

How are credits calculated for evaluations?

How are credits calculated for evaluations?

Credits consumed for each scenario are calculated by adding together:

- The Agent task run (the conversation with your Agent)

- The simulator (the persona/user simulation) - uses an LLM to simulate the user persona

- The Evaluator evaluations (both scenario Evaluators and global Evaluators) - each Evaluator uses an LLM to evaluate the conversation

Can I rerun a previous evaluation?

Can I rerun a previous evaluation?

Yes, you can run the same Test scenarios again at any time. Each run is saved in your Runs history, allowing you to compare results across different Agent versions.

What's the difference between scenario Evaluators and global Evaluators?

What's the difference between scenario Evaluators and global Evaluators?

Scenario Evaluators are created within Test scenarios and only evaluate conversations generated by that specific scenario. Global Evaluators are created in the Evaluators tab and can be selected to run on any Test Suite or scenario, providing reusable evaluation criteria across all your tests. Global Evaluators are also used in the Performance tab for monitoring live conversations.

I don't see the Evals section. How do I get access?

I don't see the Evals section. How do I get access?

Evals is being rolled out progressively, starting with Enterprise customers. If you’re an Enterprise customer and don’t see the Evals section in the Monitor tab yet, reach out to your account manager to discuss access.